GPT-4, Stable Diffusion, and Beyond: How Generative AI Will Shape Human Society

In 2020, I wrote about the GPT-3 model. Late last year, OpenAI released ChatGPT, which was built on the GPT-3.5 series of models and fine-tuned using Reinforcement Learning from Human Feedback (RLHF). And now GPT-4 has been released. It has only been out for a few days, but people are already using it for striking applications: creating office documents, turning sketches into functional apps, building personal tutors, and more.

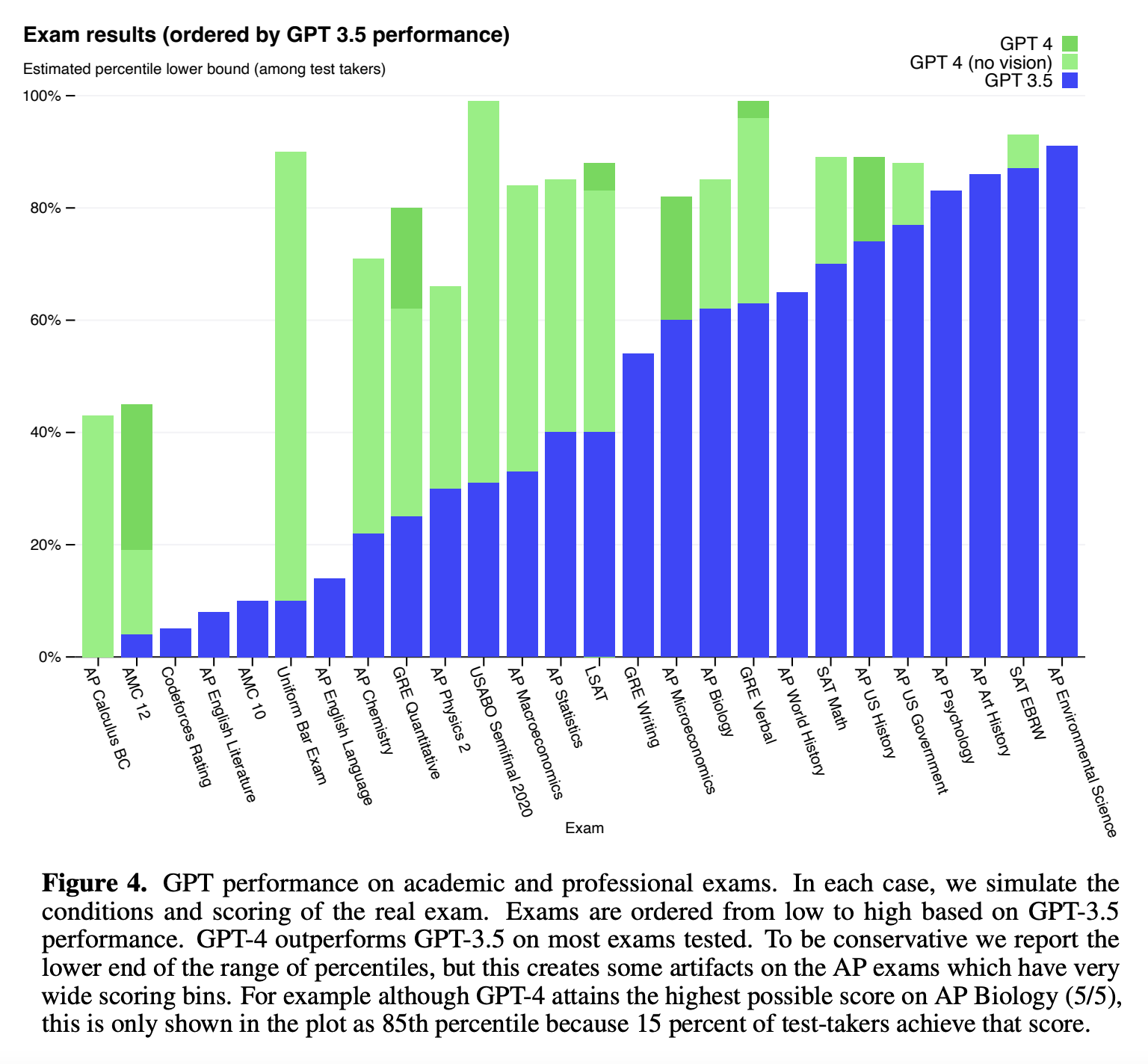

The newly released GPT-4 exhibits human-level performance on a variety of professional and academic exams. Source: OpenAI GPT-4 Technical Report
