The Meritocratic Mirage

We demand fourteen years of academic toil, then liquidate it in a thirty-second glance. The board examination is not a test of merit—it is a test of compliance.

A collection of thoughts on engineering, AI, and systems.


Large Language Models (LLMs) are good at generating coherent text, but they have a few inherent limitations:

There are two ways to address these limitations:

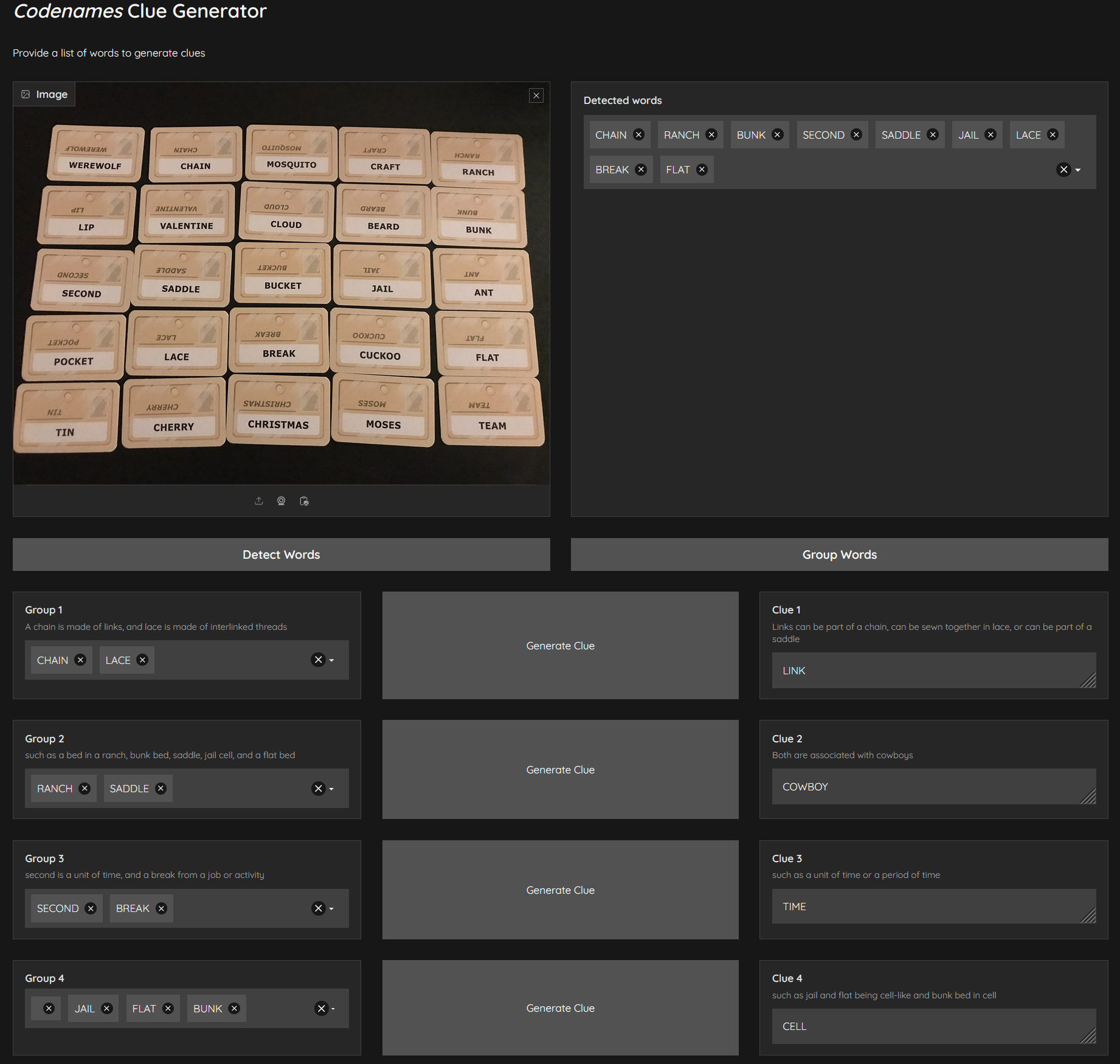

Codenames is a word association game where two teams guess secret words based on one-word clues. The game involves a 25-word grid, with each team identifying their words while avoiding the opposing team’s words and the “assassin” word.

I knew that word embeddings could be used to group words by semantic similarity. This seemed like a good way to cluster the words on the board and generate clues. I largely got this to work, with a few surprises and lessons along the way.
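As a rough illustration of the idea, here is a minimal sketch that picks a clue by cosine similarity. The three-dimensional vectors, the word list, and the `best_clue` helper are all made up for this example; a real solver would load pretrained embeddings and would also need to steer away from the opposing team's words and the assassin.

```python
import math

# Toy 3-dimensional vectors, invented for illustration only.
# Real word embeddings come from a pretrained model and have many more dimensions.
TOY_EMBEDDINGS = {
    "apple": (0.9, 0.1, 0.0),
    "banana": (0.8, 0.2, 0.1),
    "fruit": (0.85, 0.15, 0.05),
    "river": (0.0, 0.9, 0.2),
    "ocean": (0.1, 0.8, 0.3),
    "water": (0.05, 0.85, 0.25),
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_clue(targets, candidates, embeddings=TOY_EMBEDDINGS):
    """Hypothetical helper: choose the candidate whose weakest similarity
    to any target word is the highest, so the clue fits all targets."""
    def score(clue):
        return min(
            cosine_similarity(embeddings[clue], embeddings[target])
            for target in targets
        )
    return max(candidates, key=score)


fruit_clue = best_clue(["apple", "banana"], ["fruit", "water"])
water_clue = best_clue(["river", "ocean"], ["fruit", "water"])
```

Scoring by the weakest match is only one possible choice; averaging the similarities would favor clues that fit most targets very well at the expense of one poor fit.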

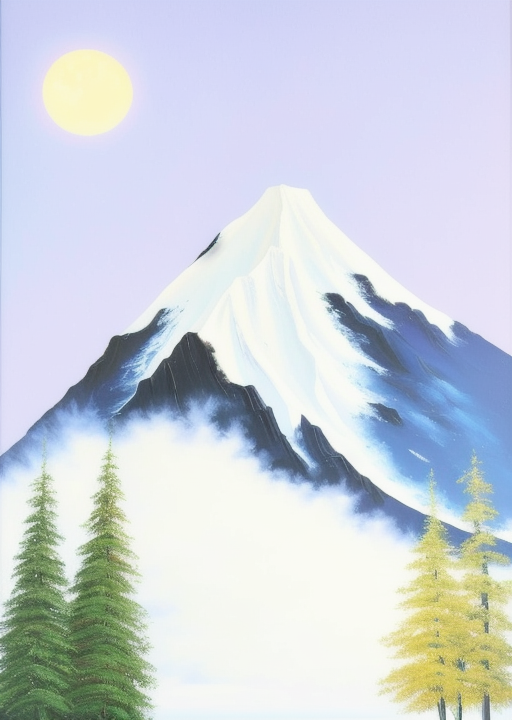

By now, many of us are familiar with text-to-image models like Midjourney, DALL·E 3, and Stable Diffusion. Recently, I came across an interesting project called Visual Anagrams that uses a text-to-image model to generate picture illusions. You supply two different text prompts, and the model generates a picture that matches each prompt under a different transformation, such as a flip, a rotation, or a pixel permutation. Growing up, I had a nerdy fascination with illusions and ambigrams, so I was thrilled to give this a try.

While chatbots have become common in applications like customer service, they have several shortcomings that disrupt the user experience. Traditional chatbots rely on pattern matching and database lookups, which are ineffective when a user’s question deviates from what was expected. Responses may feel impersonal and miss the user’s true intent when a question strays even slightly from the pattern-matching rules.

This is where large language models (LLMs) can provide value. LLMs are better equipped to handle out-of-scope questions due to their ability to understand context and previous exchanges. They can generate more personalized responses compared to typical rule-based chatbots. As such, chatbots represent a prime use case for generative AI in enterprises.

When it comes to creating artwork, there are many generative AI tools, but my favorite is vanilla Stable Diffusion. Since it is open source, an ecosystem of tools and techniques has sprouted around it. With it, you can train your own model, fine-tune existing models, or use countless other models trained and hosted by others.

One of my favorite use cases is rendering rough sketches into much prettier artwork. In this post, we will see how to set up real-time rendering for an interactive drawing experience. See below for how quickly we can come up with a decent painting.

In 2020, I wrote about the GPT-3 model. Late last year, OpenAI released ChatGPT, which was based on GPT-3 but trained using Reinforcement Learning from Human Feedback (RLHF). And now GPT-4 has been released. It has only been out for a few days, but it is already seeing incredible applications such as creating office documents, turning sketches into functional apps, creating personal tutors, and more.

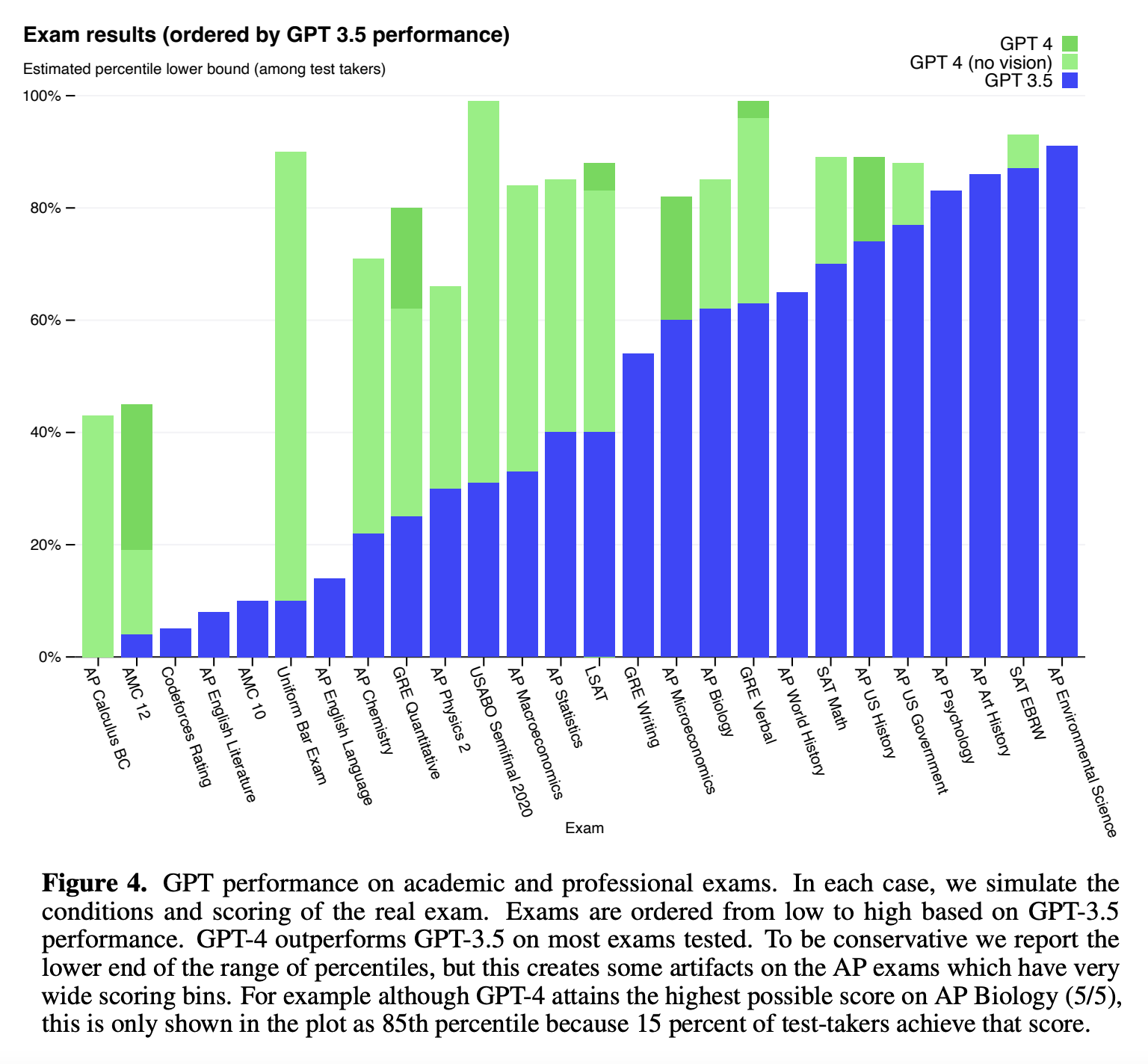

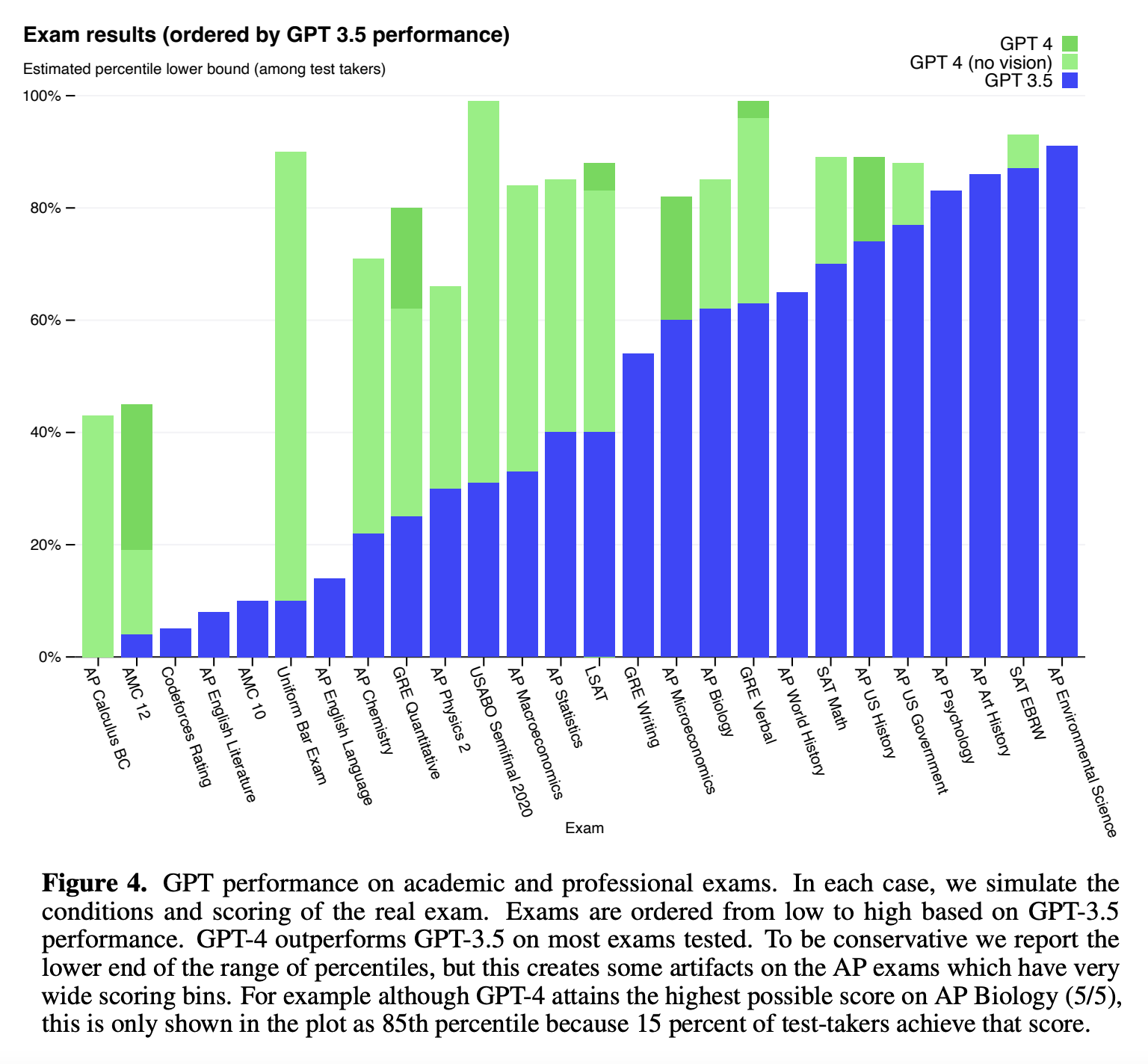

The newly released GPT-4 exhibits human-level performance on a variety of common and professional academic exams. Source: OpenAI GPT-4 Technical Report

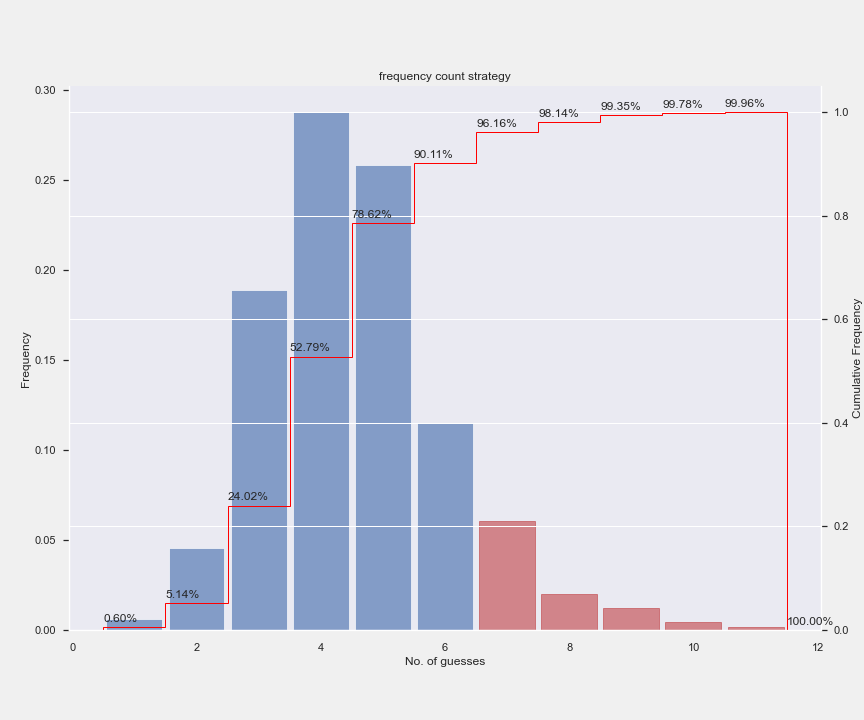

Wordle is a web-based word game that became incredibly popular during the pandemic. It grew so popular that The New York Times bought it for a significant sum and now hosts it. The game is a lot of fun to solve manually, but I am also interested in solving it computationally. This is my attempt at coming up with a solution strategy for the game.

In July 2021, AWS and Hugging Face announced a collaboration to make Hugging Face a first-party framework within SageMaker. Previously, you had to use a PyTorch container and install packages manually to do this. With the new Hugging Face Deep Learning Containers (DLCs) available in Amazon SageMaker, the process of training and deploying models is greatly simplified.

In this post, we will go through a high-level overview of the Hugging Face Transformers library before looking at how to use the newly announced Hugging Face DLCs within SageMaker.

Firefox, like other modern browsers, has an excellent built-in JSON viewer. It also supports data URIs, which let you embed a resource as text in the URL itself and load it as if it were an external resource. We can combine these two features to build a handy JSON previewer that can be invoked from the command line.

For example, when you enter the link below into your browser, it opens a text document containing “Hello, World!”.

data:,Hello%2C%20World!

This content is not limited to plain text. It can even be an HTML document:

data:text/html,%3Ch1%3EHello%2C%20World!%3C%2Fh1%3E
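To make the encoding step concrete, here is a minimal sketch that builds such data URIs in Python. The helper names `to_data_uri` and `json_data_uri` are hypothetical, and the `application/json` media type is an assumption about what the browser's JSON viewer expects; handing the resulting URI to the browser is left out.

```python
import json
from urllib.parse import quote


def to_data_uri(content: str, mime_type: str = "") -> str:
    """Percent-encode the content and prepend the 'data:<mime type>,' prefix.

    safe="" encodes every reserved character, including "/" and "!",
    so "!" becomes %21 here; browsers decode both forms the same way.
    """
    return f"data:{mime_type},{quote(content, safe='')}"


def json_data_uri(obj) -> str:
    """Serialize a Python object to JSON and wrap it in a data URI."""
    return to_data_uri(json.dumps(obj), "application/json")


plain_uri = to_data_uri("Hello, World!")
html_uri = to_data_uri("<h1>Hello, World!</h1>", "text/html")
json_uri = json_data_uri({"greeting": "Hello, World!"})
```

Encoding everything outside the unreserved set is the conservative choice: it avoids characters such as `#` or `?` in the payload being interpreted as part of the URL structure.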